I got it wrong: the agent needs a brain

About a month ago I wrote about stopping coding. The short version: Claude Code was my only development tool. I wrote no code by hand, and spent most of my time specifying workflows, checking outputs, and deciding what to try next.

Since then I started working on ARC-AGI-2, and the agent got a brain. Thanks to Alessio and Raja for nudging me in that direction.

Between the brain and stronger Codex models, I can give the agent much more autonomy because memory no longer has to live in the startup file. Less for me to remember.

From One Big File to a Brain

Back then, my CLAUDE.md grew into a large operating manual.

But it also mixed two different things:

- instructions for how the agent should behave;

- documentation of what the project currently knew.

Those are not the same thing. Instructions should be stable. Documentation changes constantly.

The Claude Code docs are explicit about this: "target under 200 lines per CLAUDE.md file. Longer files consume more context and reduce adherence." Once I started packing in both rules and project history, the file became diluted. The agent could still read it, but the signal was mixed.

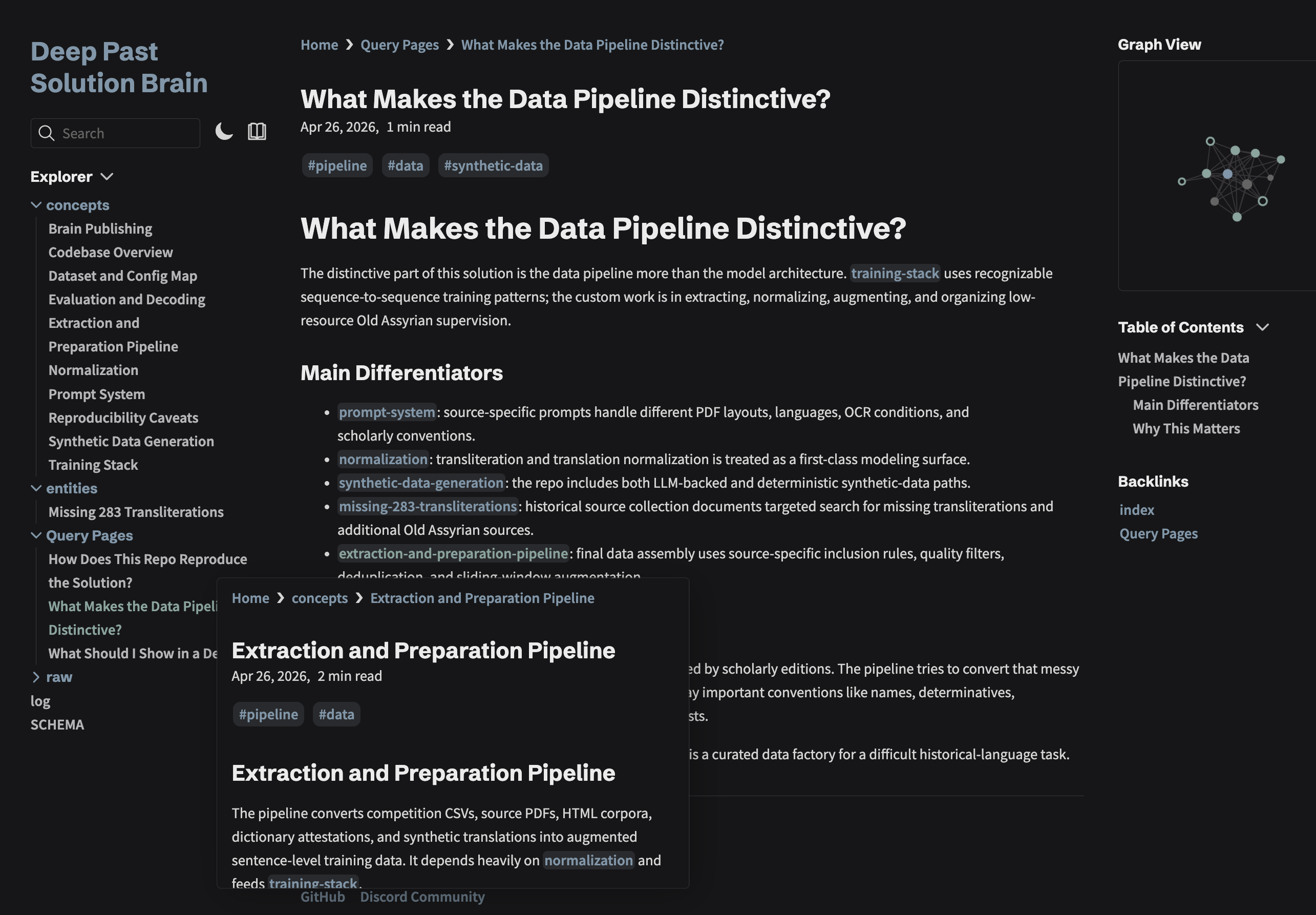

In ARC, I started using an llm-wiki style project brain much more seriously.

This came from Andrej Karpathy's LLM Wiki idea: instead of rebuilding context from raw documents every time, compile raw material into a persistent Markdown wiki. In practice, I use an llm-wiki skill to do that.

It is just markdown files in brain/: a schema, index, log, concept pages, entity pages, comparisons, proposals, and filed query answers.

The important part is the separation:

AGENTS.md: stable operating rules- Skills: repeatable procedures

brain/: project memory and synthesis- Scripts/configs: executable truth

AGENTS.md became the constitution. The brain became the library.

My actual prompt became much simpler:

"Look up the brain and tell me which params I trained this experiment with."

The current AGENTS.md is short and practical: secrets, data safety, common commands, normalization rules, and publishing hygiene.

The detailed explanation lives in the brain: extraction pipeline, prompt system, synthetic data, and query pages like "what makes this data pipeline distinctive?"

Skills Run. The Brain Remembers.

A skill answers: "How do I run this workflow?"

The brain answers: "Why are we running it, what happened last time, and what did we decide?"

The distinction matters. A skill tells the agent how to run a training smoke test; the brain can look up the previous tests from two weeks ago. I don't need a 6 page scratchpad of notes.

What Didn't Work

The brain can get stale.

That is the tradeoff. Once memory lives in files, it can accumulate old assumptions, abandoned ideas, and notes that were true for one experiment but wrong for the next. The agent is better at using the brain than judging what should be deleted from it.

This is where maintenance matters. The llm-wiki skill has a lint pass for exactly this: stale pages, contradictions, orphan notes, oversized pages, and tag drift. However, how deep and where to cut needs human guidance.

So the brain is not free memory. It is maintained memory.